Abstract

Few-shot domain adaptation to multiple domains aims to learn a complex image distribution across multiple domains from a few training images. A naïve solution is to train a separate model for each domain using few-shot domain adaptation methods. Unfortunately, this approach requires memory and computation time that scale linearly with the number of domains and, more importantly, such separate models cannot exploit the knowledge shared between target domains. In this paper, we propose DynaGAN, a novel few-shot domain-adaptation method for multiple target domains. DynaGAN has an adaptation module, a hyper-network that dynamically adapts a pretrained GAN model to multiple target domains. Hence, we can fully exploit the shared knowledge across target domains and avoid the linearly scaling computational requirements. Because adapting a large GAN model is still computationally challenging, we make our adaptation module lightweight using rank-1 tensor decomposition. Lastly, we propose a contrastive-adaptation loss suited to multi-domain few-shot adaptation. We validate the effectiveness of our method through extensive qualitative and quantitative evaluations.

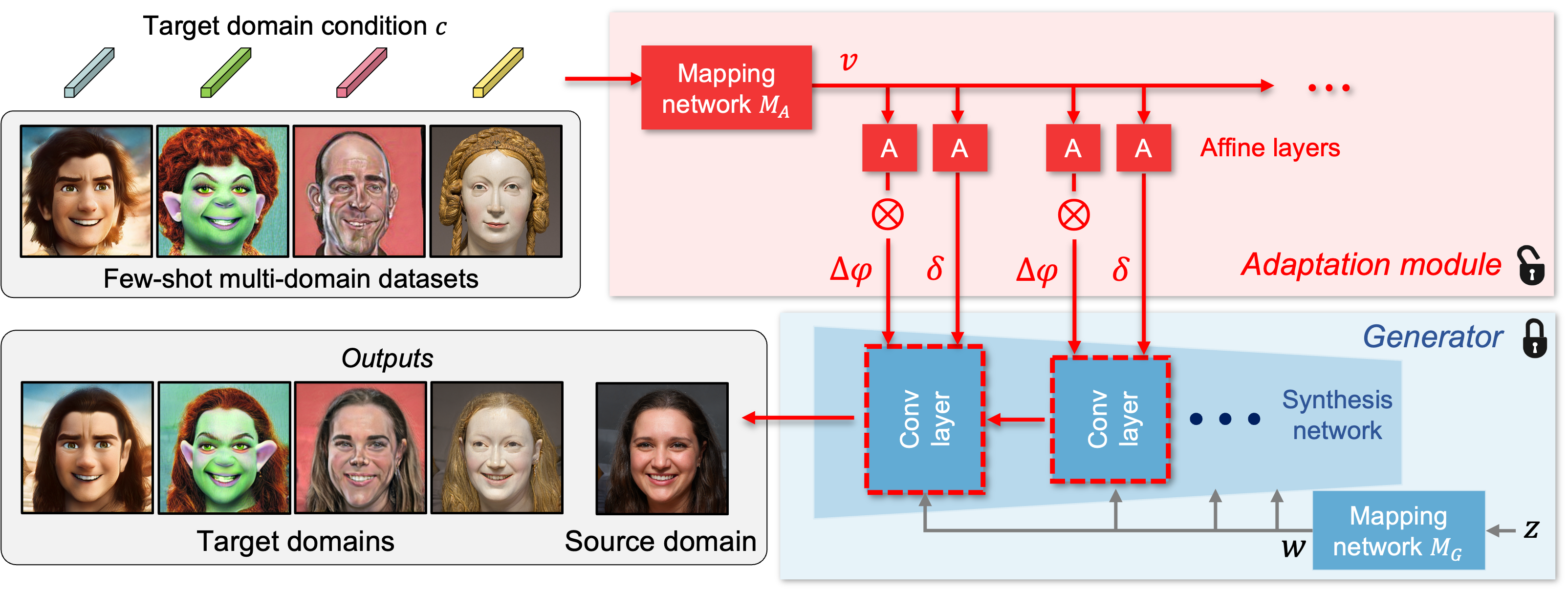

Framework

The DynaGAN network consists of a generator pretrained on a source domain and an adaptation module that dynamically modulates the generator parameters. DynaGAN takes as input a one-hot vector $c$ encoding a target domain, projects it to a continuous representation $\upsilon$ through a mapping network $M_A$, and estimates the modulation parameters $\Delta \varphi$ and $\delta$ through affine layers. These parameters modulate the weights of each convolutional layer in the generator, so that the generator is adapted to the target domain.
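The data flow described above can be illustrated with a minimal NumPy sketch for a single convolutional layer. It is not the authors' implementation. The layer sizes, the single-layer mapping network, and all function names are hypothetical. The text does not state how $\Delta \varphi$ and $\delta$ are combined with the pretrained weights, so the sketch assumes that $\Delta \varphi$ is an additive rank-1 residual built from three small vectors and that $\delta$ is a per-filter scale.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
NUM_DOMAINS = 4            # number of target domains (length of one-hot c)
EMBED_DIM = 16             # width of the continuous representation
C_OUT, C_IN, K = 8, 6, 3   # shape of one convolutional layer

# Random stand-in for the frozen, pretrained weight of one generator layer.
phi = rng.standard_normal((C_OUT, C_IN, K, K))

# Stand-in for the mapping network M_A: one linear layer plus ReLU (assumption).
W_map = rng.standard_normal((EMBED_DIM, NUM_DOMAINS)) * 0.1


def make_affine(out_dim):
    """Create (weight, bias) for one affine layer acting on the embedding."""
    return rng.standard_normal((out_dim, EMBED_DIM)) * 0.1, np.zeros(out_dim)


def apply_affine(layer, x):
    weight, bias = layer
    return weight @ x + bias


# Affine layers for the three rank-1 factors and for the per-filter scale.
A_out, A_in, A_k = make_affine(C_OUT), make_affine(C_IN), make_affine(K * K)
A_delta = make_affine(C_OUT)


def adapt_weight(domain_index):
    """Return the adapted weight and the rank-1 residual for one domain."""
    c = np.zeros(NUM_DOMAINS)
    c[domain_index] = 1.0                      # one-hot domain code
    v = np.maximum(W_map @ c, 0.0)             # continuous representation
    a = apply_affine(A_out, v)                 # factor over output channels
    b = apply_affine(A_in, v)                  # factor over input channels
    s = apply_affine(A_k, v).reshape(K, K)     # factor over the spatial kernel
    delta_phi = np.einsum("o,i,hw->oihw", a, b, s)   # rank-1 residual
    delta = 1.0 + apply_affine(A_delta, v)     # per-filter scale (assumed form)
    adapted = delta[:, None, None, None] * (phi + delta_phi)
    return adapted, delta_phi


adapted_0, delta_phi_0 = adapt_weight(0)
adapted_1, _ = adapt_weight(1)
```

Under these assumptions, the residual for this layer is described by `C_OUT + C_IN + K * K = 23` predicted values instead of the `C_OUT * C_IN * K * K = 432` entries of a full weight tensor, which is the kind of saving a rank-1 decomposition is meant to provide. The same pretrained `phi` is shared by every domain, and only the domain code changes the adapted weight.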

Links

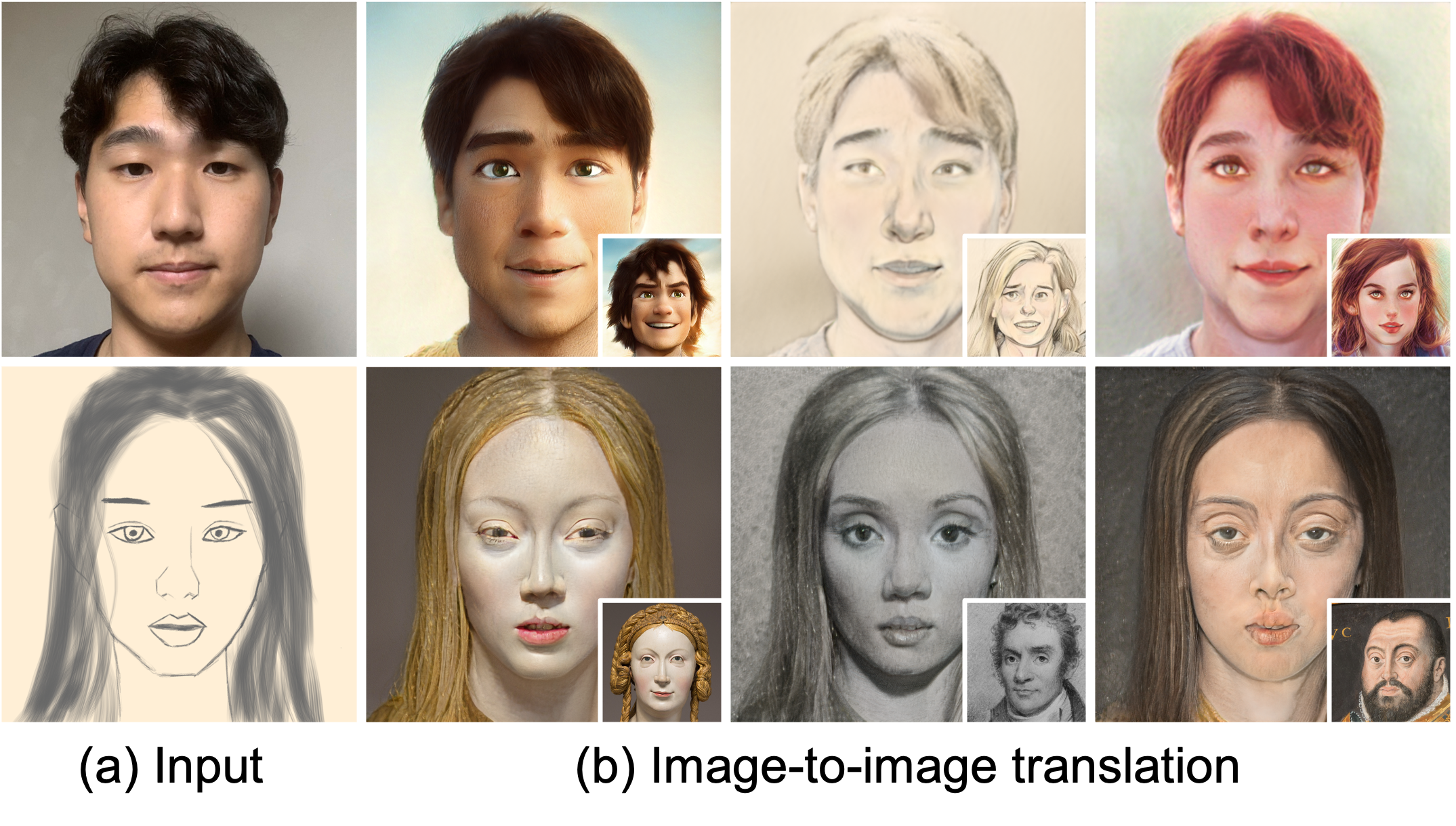

Results

Citation

@inproceedings{Kim2022DynaGAN,
  title = {DynaGAN: Dynamic Few-shot Adaptation of GANs to Multiple Domains},
  author = {Seongtae Kim and Kyoungkook Kang and Geonung Kim and Seung-Hwan Baek and Sunghyun Cho},
  booktitle = {Proceedings of the ACM (SIGGRAPH Asia)},
  year = {2022}
}